Cyber-physical systems (CPS) are complex, autonomous ensembles of different components that cooperate directly to offer smart and adaptive functionalities. They are composed of sensors, actuators, and processing components that are deeply entangled: they constantly exchange information and actively interact with the external environment. They are increasingly used in a variety of applications, and their market is growing. The CPS Summer School was aimed at students, research scientists, and R&D experts from academia and industry who wanted to learn about CPS engineering and applications.

ALOHA took part in the 3rd edition of the CPS School (Alghero, Italy, September 23-27, 2019) through several activities.

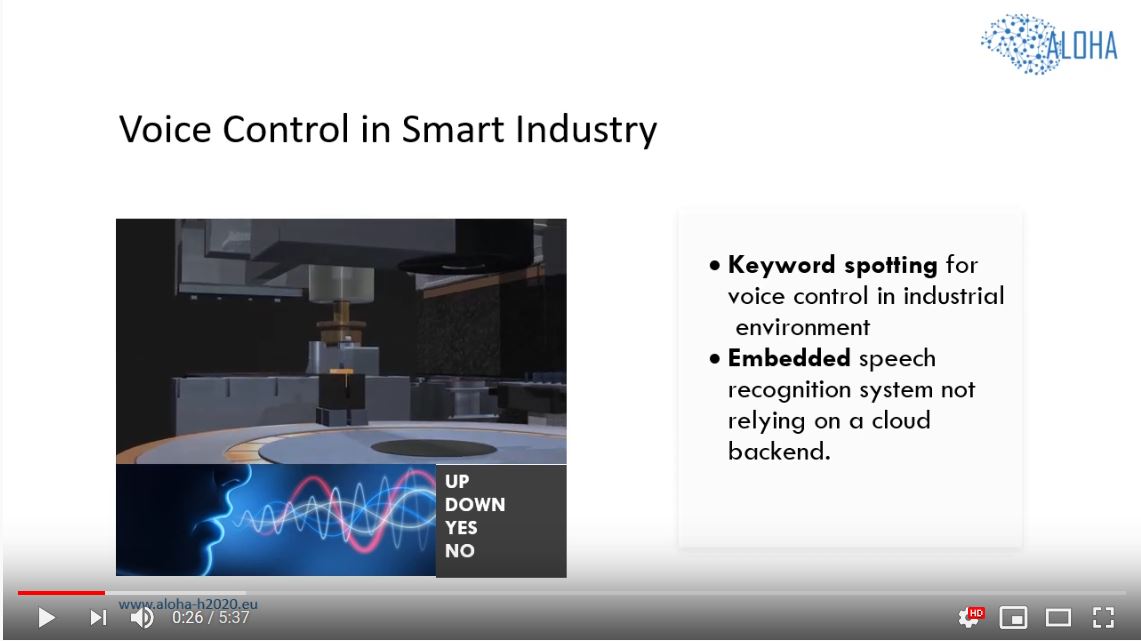

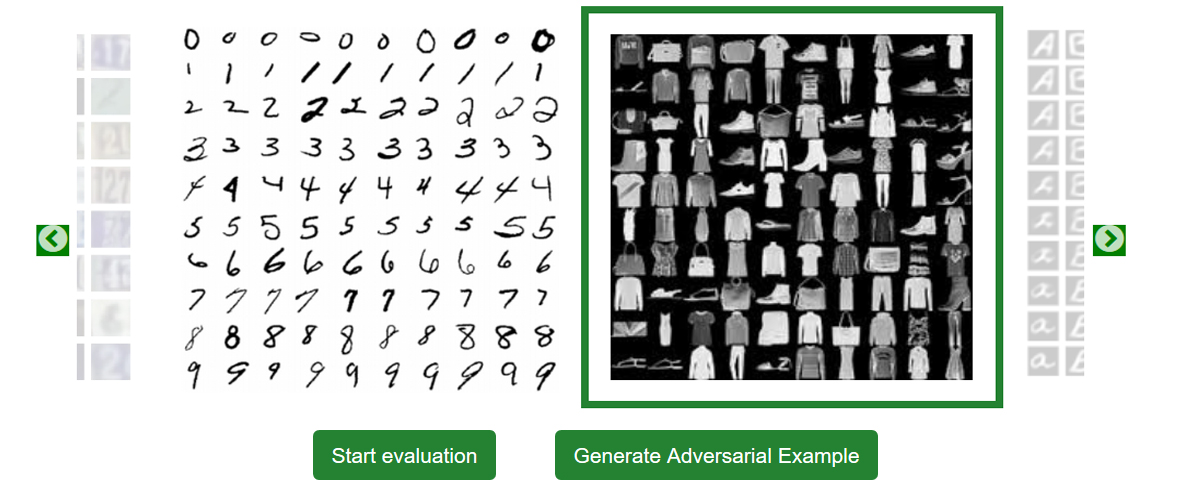

On September 25th, the day of the school devoted to “Modelling and Programming”, a session was dedicated to a practical tutorial on the technologies developed in the context of the ALOHA project.
